The purpose of a Pixel Shader is to control the color of a rendered pixel.

Read this if you are unfamiliar with the topic.

I've taken an interest in writing a 2D lighting shader.

Lighting depends on many variables.

But, as a massive simplification, it depends on two: surface direction (the normal) and light source position.
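As a minimal sketch of that simplification, the classic Lambertian diffuse model uses nothing but those two inputs: brightness is the dot product of the unit normal and the unit vector pointing towards the light, clamped at zero. This is the textbook model, not necessarily the exact formula this shader ends up using; `normalize` and `lambert` are illustrative names.

```python
import math


def normalize(v):
    """Scale a 3D vector to unit length (the zero vector is returned as-is)."""
    length = math.sqrt(sum(c * c for c in v))
    if length == 0.0:
        return (0.0, 0.0, 0.0)
    return tuple(c / length for c in v)


def lambert(normal, surface_pos, light_pos):
    """Diffuse brightness in [0, 1]: max(0, N . L).

    N is the unit surface normal; L is the unit vector from the surface
    point towards the light.
    """
    n = normalize(normal)
    l = normalize(tuple(lp - sp for lp, sp in zip(light_pos, surface_pos)))
    return max(0.0, sum(a * b for a, b in zip(n, l)))


# A surface facing the viewer (+Z), lit from straight ahead, then edge-on.
print(lambert((0, 0, 1), (0, 0, 0), (0, 0, 5)))  # 1.0
print(lambert((0, 0, 1), (0, 0, 0), (5, 0, 0)))  # 0.0
```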

In 3D art, getting a normal is easy.

The data is already there, because it is essential to how the model is rendered.
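For comparison, here is how a 3D mesh gets its normals almost for free: a triangle's face normal is the normalized cross product of two of its edges. A minimal sketch; `face_normal` is an illustrative name, and real engines usually also store smoothed per-vertex normals.

```python
import math


def face_normal(a, b, c):
    """Unit normal of triangle (a, b, c) from the cross product of two edges.

    With counter-clockwise winding as seen from the front, the normal
    points towards the viewer.
    """
    e1 = [b[i] - a[i] for i in range(3)]
    e2 = [c[i] - a[i] for i in range(3)]
    n = (
        e1[1] * e2[2] - e1[2] * e2[1],
        e1[2] * e2[0] - e1[0] * e2[2],
        e1[0] * e2[1] - e1[1] * e2[0],
    )
    length = math.sqrt(sum(x * x for x in n))
    if length == 0.0:
        raise ValueError("degenerate triangle has no normal")
    return tuple(x / length for x in n)


print(face_normal((0, 0, 0), (1, 0, 0), (0, 1, 0)))  # (0.0, 0.0, 1.0)
```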

But 2D art is different.

Although we can infer the normal of certain pixels, like those on the outer edges, there is no "physical" depth axis to read the rest from.
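The outer edges are the one place a flat sprite does give some direction for free. One way to estimate it (an assumption, not something this shader necessarily does) is to take the gradient of the sprite's alpha channel: alpha drops from opaque to transparent across the silhouette, so the outward direction points against the gradient. `edge_normal` is a hypothetical helper.

```python
import math


def edge_normal(alpha, x, y):
    """Estimate the outward 2D direction at pixel (x, y) of an alpha mask.

    alpha is a list of rows (alpha[y][x]; 0 = transparent, 1 = opaque).
    Uses central differences; pixels outside the image count as
    transparent. Returns None where the gradient is zero (flat interior
    or empty space). Image coordinates: +y points down.
    """
    h, w = len(alpha), len(alpha[0])

    def a(px, py):
        return alpha[py][px] if 0 <= px < w and 0 <= py < h else 0.0

    gx = (a(x + 1, y) - a(x - 1, y)) / 2.0
    gy = (a(x, y + 1) - a(x, y - 1)) / 2.0
    length = math.hypot(gx, gy)
    if length == 0.0:
        return None
    # Alpha increases inwards, so the outward normal is the negated gradient.
    return (-gx / length, -gy / length)


mask = [
    [0, 0, 0, 0],
    [0, 1, 1, 0],
    [0, 1, 1, 0],
    [0, 0, 0, 0],
]
# Top-right pixel of the square: points right (+x) and up (-y on screen).
print(edge_normal(mask, 2, 1))
```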

Surface normals are created by the artist and kept consistent only by choice.

It's exactly this sort of constructed fakeness that keeps me interested in art.

My purpose is not to replace per-pixel artistic decisions about color.

It is to add another layer of control to a sprite that is interactive in a game engine.

We can represent direction as a color.

This is commonly known as normal mapping.
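The usual mapping for normal maps stores each component of a unit vector, which lies in [-1, 1], as channel = (component + 1) / 2, scaled to 0-255. A minimal sketch of encoding and decoding; the function names are illustrative.

```python
import math


def encode_normal(n):
    """Map a unit vector (components in [-1, 1]) to an 8-bit RGB color."""
    return tuple(round((c * 0.5 + 0.5) * 255) for c in n)


def decode_normal(rgb):
    """Map an 8-bit RGB color back to a unit direction vector.

    Re-normalizes, because 8-bit rounding leaves the vector slightly off
    unit length.
    """
    v = [c / 255 * 2.0 - 1.0 for c in rgb]
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)


print(encode_normal((0.0, 0.0, 1.0)))  # (128, 128, 255)
```

A normal pointing straight out of the screen encodes as (128, 128, 255), which is why flat areas of a typical normal map look light blue-violet.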

We can use an extra map to light a 2D image.
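Putting the pieces together, lighting a sprite pixel means reading the normal map at the same pixel, decoding it to a vector, and scaling the sprite's color by how directly that vector faces the light. A minimal sketch assuming a Lambertian model, a light hovering above the sprite plane at positive z, and a hypothetical `ambient` floor so unlit areas don't go fully black; `shade_pixel` is an illustrative name.

```python
import math


def shade_pixel(albedo_rgb, normal_rgb, pixel_pos, light_pos, ambient=0.2):
    """Light one sprite pixel using the matching pixel of its normal map.

    albedo_rgb: the sprite's color at this pixel (0-255 per channel).
    normal_rgb: the normal map's color at the same pixel (0-255 per channel).
    pixel_pos, light_pos: 3D positions; the sprite lies in the z = 0 plane.
    ambient: hypothetical minimum light level so unlit pixels aren't black.
    """
    n = [c / 255 * 2.0 - 1.0 for c in normal_rgb]  # decode color -> vector
    l = [lp - pp for lp, pp in zip(light_pos, pixel_pos)]  # towards the light
    n_len = math.sqrt(sum(c * c for c in n))
    l_len = math.sqrt(sum(c * c for c in l))
    if n_len == 0.0 or l_len == 0.0:
        diffuse = 0.0
    else:
        # Lambertian term: cosine of the angle between normal and light.
        diffuse = max(0.0, sum(a * b for a, b in zip(n, l)) / (n_len * l_len))
    brightness = min(1.0, ambient + diffuse)
    return tuple(round(c * brightness) for c in albedo_rgb)


flat = (128, 128, 255)  # a normal pointing straight out of the screen
print(shade_pixel((200, 100, 50), flat, (10, 10, 0), (10, 10, 50)))  # (200, 100, 50)
print(shade_pixel((200, 100, 50), flat, (10, 10, 0), (100, 10, 0)))  # grazing light: dim
```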

So first, I had to figure out which axes to pull the data from.

I started with a 2-axis format:

This had some appealing results (which are lost, since I forgot to take screenshots), but I felt the control was a little off.
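The 2-axis format itself isn't shown here, so as an assumption: one common way to get by with only two channels is to store X in red and Y in green and rebuild Z on the fly, relying on the normal being unit length and facing the viewer. A minimal sketch; `decode_two_channel` is an illustrative name.

```python
import math


def decode_two_channel(r, g):
    """Rebuild a 3D normal from X in red and Y in green (0-255 each).

    Assumes the stored normal was unit length and faces the viewer
    (z >= 0), so z = sqrt(1 - x^2 - y^2). The max() guards against 8-bit
    rounding pushing x^2 + y^2 slightly above 1.
    """
    x = r / 255 * 2.0 - 1.0
    y = g / 255 * 2.0 - 1.0
    z = math.sqrt(max(0.0, 1.0 - x * x - y * y))
    return (x, y, z)


print(decode_two_channel(128, 128))  # nearly (0, 0, 1): facing the viewer
print(decode_two_channel(255, 128))  # nearly (1, 0, 0): facing right
```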

Then I tried a quadrant method, using the blue channel to determine the quadrant.

The idea was to make it easier to find direction.

But I found the setup annoying to work with and abandoned it pretty quickly.
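The exact quadrant layout isn't described, so this is a hypothetical reconstruction for illustration only: blue is split into four bands that choose the signs of X and Y, while red and green store the magnitudes. `QUADRANT_SIGNS` and `decode_quadrant` are made-up names.

```python
# Hypothetical layout: blue is split into four bands, and each band
# fixes the signs of X and Y (i.e. picks a quadrant).
QUADRANT_SIGNS = [(1, 1), (-1, 1), (-1, -1), (1, -1)]


def decode_quadrant(r, g, b):
    """Decode a hypothetical quadrant-encoded color into a 2D direction.

    Red and green hold the magnitudes of X and Y (0-255 -> 0.0-1.0);
    blue (0-255) selects the quadrant in bands of 64.
    """
    sx, sy = QUADRANT_SIGNS[b // 64]
    return (sx * r / 255, sy * g / 255)


print(decode_quadrant(200, 100, 10))   # band 0: both components positive
print(decode_quadrant(200, 100, 140))  # band 2: same magnitudes, both negative
```

One drawback of a layout like this is that a small change in blue can flip the decoded direction entirely, so neighboring colors don't necessarily mean neighboring directions.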

Having a third axis could remove the need for quadrants.

My first thought was to use this 3-axis setup:

It reminds me of barycentric coordinates and therefore has some interesting properties.

It could have worked, but I kept looking for other answers.
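For readers who haven't met barycentric coordinates: they describe a point relative to a triangle as three weights, one per corner, that always sum to 1. A minimal sketch of computing them for a 2D point; `barycentric` is an illustrative name, and this isn't a claim about how the 3-axis color setup above was defined.

```python
def barycentric(p, a, b, c):
    """Barycentric weights (u, v, w) of 2D point p in triangle (a, b, c).

    The weights sum to 1 and satisfy p = u*a + v*b + w*c; all three lie
    in [0, 1] exactly when p is inside the triangle. Raises
    ZeroDivisionError for a degenerate (zero-area) triangle.
    """
    (px, py), (ax, ay), (bx, by), (cx, cy) = p, a, b, c
    det = (by - cy) * (ax - cx) + (cx - bx) * (ay - cy)
    u = ((by - cy) * (px - cx) + (cx - bx) * (py - cy)) / det
    v = ((cy - ay) * (px - cx) + (ax - cx) * (py - cy)) / det
    return (u, v, 1.0 - u - v)


print(barycentric((0.25, 0.25), (0, 0), (1, 0), (0, 1)))  # (0.5, 0.25, 0.25)
```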

Then I came to this 3-axis setup:

It has clear directions and matches how we traditionally think of 3D axes, which makes it easier to think about and use.

This is what I'm using now, and it works well.

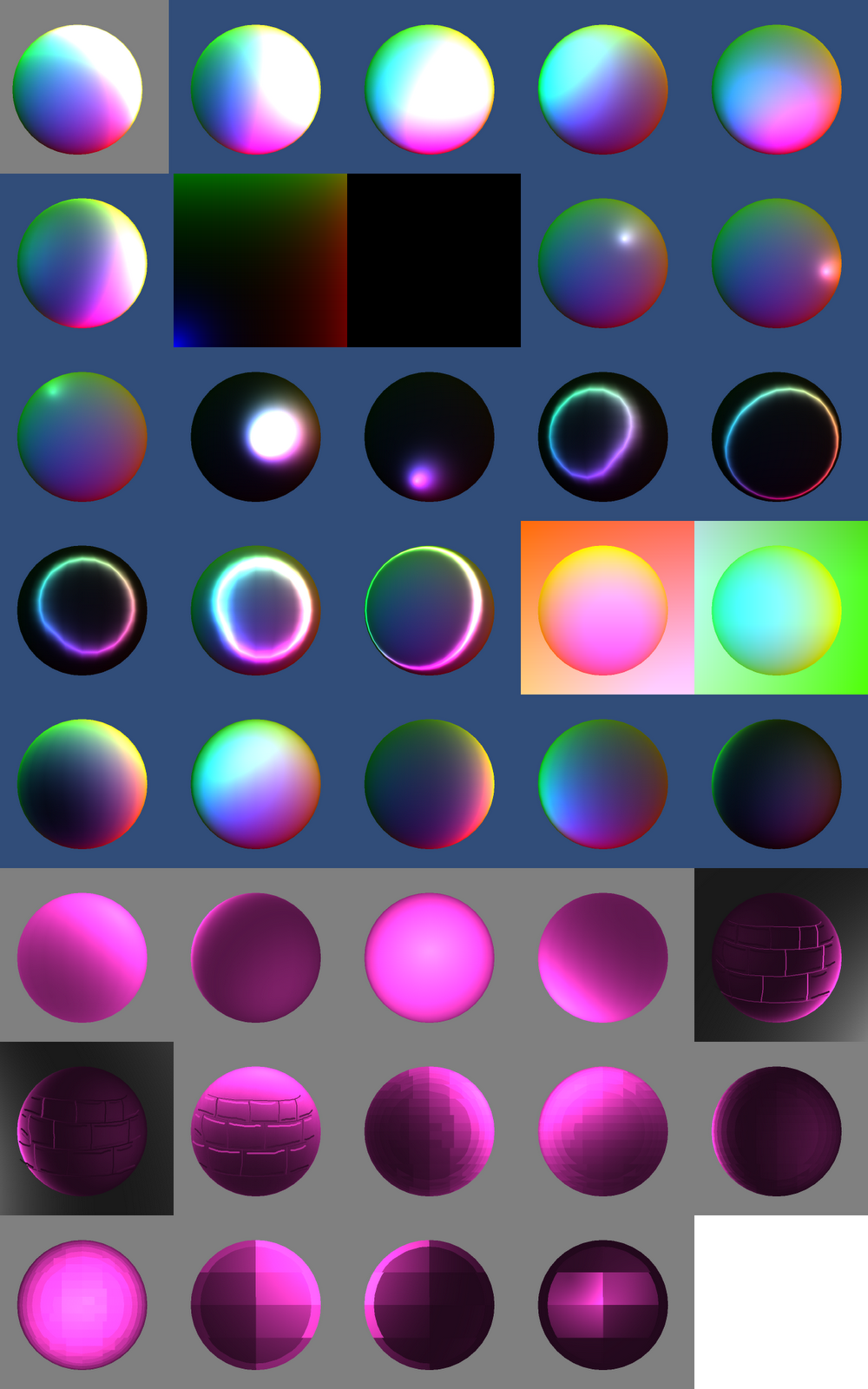

So here's the progression of getting the base code to work.

I'm writing this from scratch and tweaking it to my needs.

The light source follows the mouse position, so I could move it around and see changes quickly.
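A minimal sketch of turning the mouse position into a light direction for one pixel, assuming screen coordinates with +y pointing down and a normal map that uses +y up (the "OpenGL-style" convention; "DirectX-style" maps store green flipped, in which case the y flip below should be dropped). `mouse_light_dir` and `light_height` are hypothetical names.

```python
import math


def mouse_light_dir(mouse_xy, pixel_xy, light_height=64.0):
    """Unit vector from a sprite pixel towards a light placed at the mouse.

    mouse_xy, pixel_xy: screen coordinates in pixels, +y pointing down.
    Result: +x right, +y up, +z towards the viewer. light_height is a
    hypothetical tuning value: how far in front of the sprite the light
    hovers, in pixels (must be > 0 so the vector is never zero).
    """
    dx = mouse_xy[0] - pixel_xy[0]
    dy = pixel_xy[1] - mouse_xy[1]  # flip screen y (down) to y up
    dz = light_height
    length = math.sqrt(dx * dx + dy * dy + dz * dz)
    return (dx / length, dy / length, dz / length)


print(mouse_light_dir((100, 100), (100, 100)))  # (0.0, 0.0, 1.0)
```

The result can be dotted with each decoded normal to get the per-pixel brightness; a larger `light_height` spreads the light more evenly, a smaller one makes it rake across the surface.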

Older attempts and mistakes are towards the top; newer, properly working results are towards the bottom.

The idea is to draw a normal map by hand.

Reducing the number of colors makes surface direction more apparent.

It also makes the colors easier to pick from and keep consistent.
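Reducing the colors amounts to snapping every normal to the nearest direction in a small palette. A minimal sketch, assuming a hypothetical palette of one straight-out normal plus a ring of tilted ones, with "nearest" measured by the largest dot product; `make_palette` and `snap_to_palette` are illustrative names.

```python
import math


def make_palette(steps=8, tilt_deg=45.0):
    """Hypothetical palette: one straight-out normal plus `steps` normals
    tilted by `tilt_deg` and spread evenly around the circle."""
    t = math.radians(tilt_deg)
    ring = [
        (math.sin(t) * math.cos(2 * math.pi * i / steps),
         math.sin(t) * math.sin(2 * math.pi * i / steps),
         math.cos(t))
        for i in range(steps)
    ]
    return [(0.0, 0.0, 1.0)] + ring


def snap_to_palette(normal, palette):
    """Return the palette direction closest to `normal` (largest dot product)."""
    return max(palette, key=lambda d: sum(a * b for a, b in zip(normal, d)))


palette = make_palette()
print(snap_to_palette((0.1, 0.0, 0.99), palette))  # (0.0, 0.0, 1.0)
```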

In theory, manually drawing the normal map, such as the "brick" pattern on the sphere, seems crazy.

But it ends up having really interesting and appealing results, despite the fact that it's a normal map defined with solid-color lines.

Here are some more test results using a sprite and three different types of normal map:

Using multiple spheres for normals creates a crapload of distortion, but it's quick and easy to set up.
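Part of why spheres are quick to set up is that their normal maps can be generated procedurally: each pixel inside the circle gets the normal of the matching point on a hemisphere facing the viewer. A minimal sketch (not necessarily how the maps in these tests were made); `sphere_normal_map` is an illustrative name.

```python
import math


def sphere_normal_map(size):
    """Generate a size x size sphere normal map as rows of RGB tuples.

    Pixels outside the circle get None (no normal). Inside, the normal is
    the point on the unit hemisphere facing the viewer, encoded as
    channel = (component + 1) / 2 * 255. Image rows run top to bottom,
    so y is flipped to keep +y pointing up.
    """
    rows = []
    for py in range(size):
        row = []
        for px in range(size):
            x = (px + 0.5) / size * 2.0 - 1.0
            y = -((py + 0.5) / size * 2.0 - 1.0)
            d2 = x * x + y * y
            if d2 > 1.0:
                row.append(None)  # outside the sphere's silhouette
                continue
            z = math.sqrt(1.0 - d2)
            row.append(tuple(round((c + 1.0) / 2.0 * 255) for c in (x, y, z)))
        rows.append(row)
    return rows


m = sphere_normal_map(16)
print(m[8][8])  # near the center: mostly blue, facing the viewer
```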

The last row is starting to actually look decent, even though it uses the multi-sphere normal map.

It's a bit too subtle in still images, but it's much more noticeable (and cool) when it happens in real time and changes with the light's position.

Manually painting the normals has much more potential (and was my original goal in coding this), but I haven't yet taken the time to make a really nice map.

That'll be my next test, probably on something static.

Obviously it's less expensive to use on static assets, since every animation frame needs its own normal map, but it's still doable for animations.

There's still a lot of work to do.

Even if you're not a programmer, feel free to comment.

It could lead to a much better shader or other features.